Secure Sensing and Learning (SSL) Research Lab

Research Overview

We are interested in security and privacy in smart connected devices and digital interactions. The overarching goal of our research group is to create secure machine learning models for existing and upcoming technologies across local and online applications. Our group is building methodologies to ensure user security and privacy in IoT devices and in online communications. We apply secure machine learning models in the areas of wearable devices, biometrics, attack-averse authentication, and side-channel attack formulation.

Active Projects

This research investigates active authentication for mixed reality systems using behavioral biometrics produced during natural in-headset interaction. MR devices are often shared across users, used in intermittent sessions, and built around continuous 3D interaction, which makes traditional authentication mechanisms difficult to apply without disrupting immersion. Passwords and virtual keyboards can break presence and remain vulnerable to observation, while one-time face or iris checks verify who unlocked the device but not necessarily who is currently wearing it or initiating a sensitive action. This research explores active authentication as a more usable and context-aware approach: verifying user identity from signals naturally generated during MR use, whether at session entry, continuously throughout interaction, or at security-sensitive moments such as payments, data access, or administrative actions.

The work treats behavioral signals from ordinary MR interaction, including hand and controller kinematics, eye-movement dynamics, and head-pose trajectories, as biometric sources for identity verification. Rather than focusing on a single modality, this research develops a broader authentication paradigm centered on domain-informed modeling, cross-session evaluation across days and months, and compact architectures suitable for deployment on mobile XR devices. By studying both the reliability and practicality of these behavioral cues, this research aims to make active authentication viable for real MR platforms while preserving usability, privacy, and immersion.

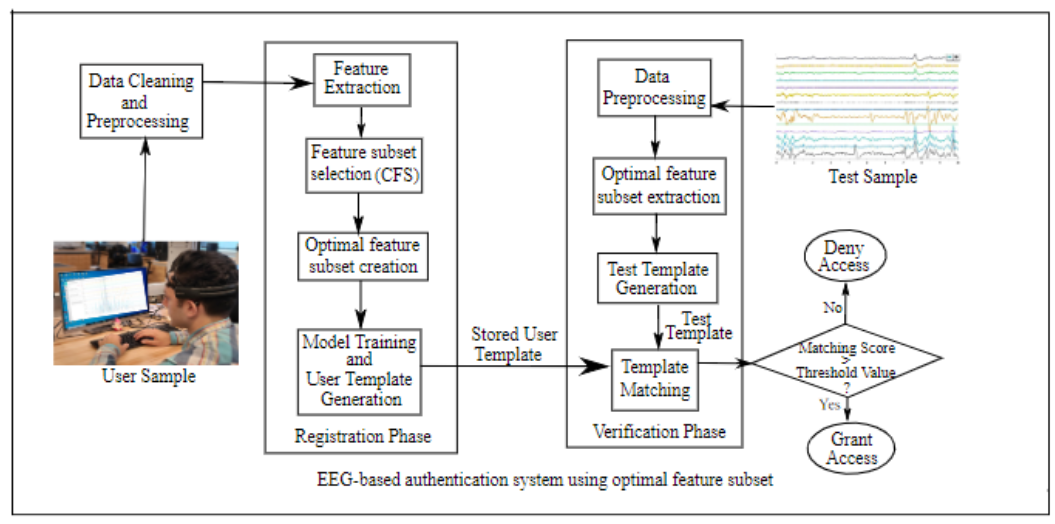

In the rapidly evolving realm of augmented reality (AR) and virtual reality (VR) systems, robust user authentication is vital due to the unique challenges posed by these immersive environments. Traditional authentication methods like PINs, passwords, facial recognition, and fingerprints are proving inadequate and vulnerable to attacks. This vulnerability is further exacerbated by the nature of immersive environments, where users' attention is often diverted from external stimuli, making traditional authentication methods less reliable. Additionally, biometric methods are susceptible to presentation attacks, in which adversaries attempt to deceive the system using fake biometric data, further emphasizing the need for authentication mechanisms tailored specifically to AR and VR systems. This project aims to address these challenges by leveraging electroencephalography (EEG) signals, which measure the electrical activity in a user's brain. The objective is to develop user authentication methods that both secure and enhance human-computer interaction within AR/VR environments. The proposed effort also includes educational activities for undergraduate and graduate students, as well as activities for broadening participation in STEM fields. Through research, education, and outreach efforts, the project seeks to shape the trajectory of emerging technology toward a more secure and equitable digital landscape.

The goal of this work is to develop novel user authentication algorithms tailored specifically for AR/VR systems. The idea is to harness EEG signals of the user's brain to develop user authentication algorithms that are not only secure but also lightweight and user-friendly. By exploring how users' brains respond to various stimuli like visual cues or auditory prompts, the research seeks to create authentication methods that seamlessly integrate into the AR/VR experience. Through comprehensive analysis, including investigation into various attack models such as spoofing attacks, the project aims to ensure the robustness and reliability of authentication performance in real-world scenarios. Novel longitudinal studies for permanence and persistence analysis will be conducted to enhance the authentication system's effectiveness.

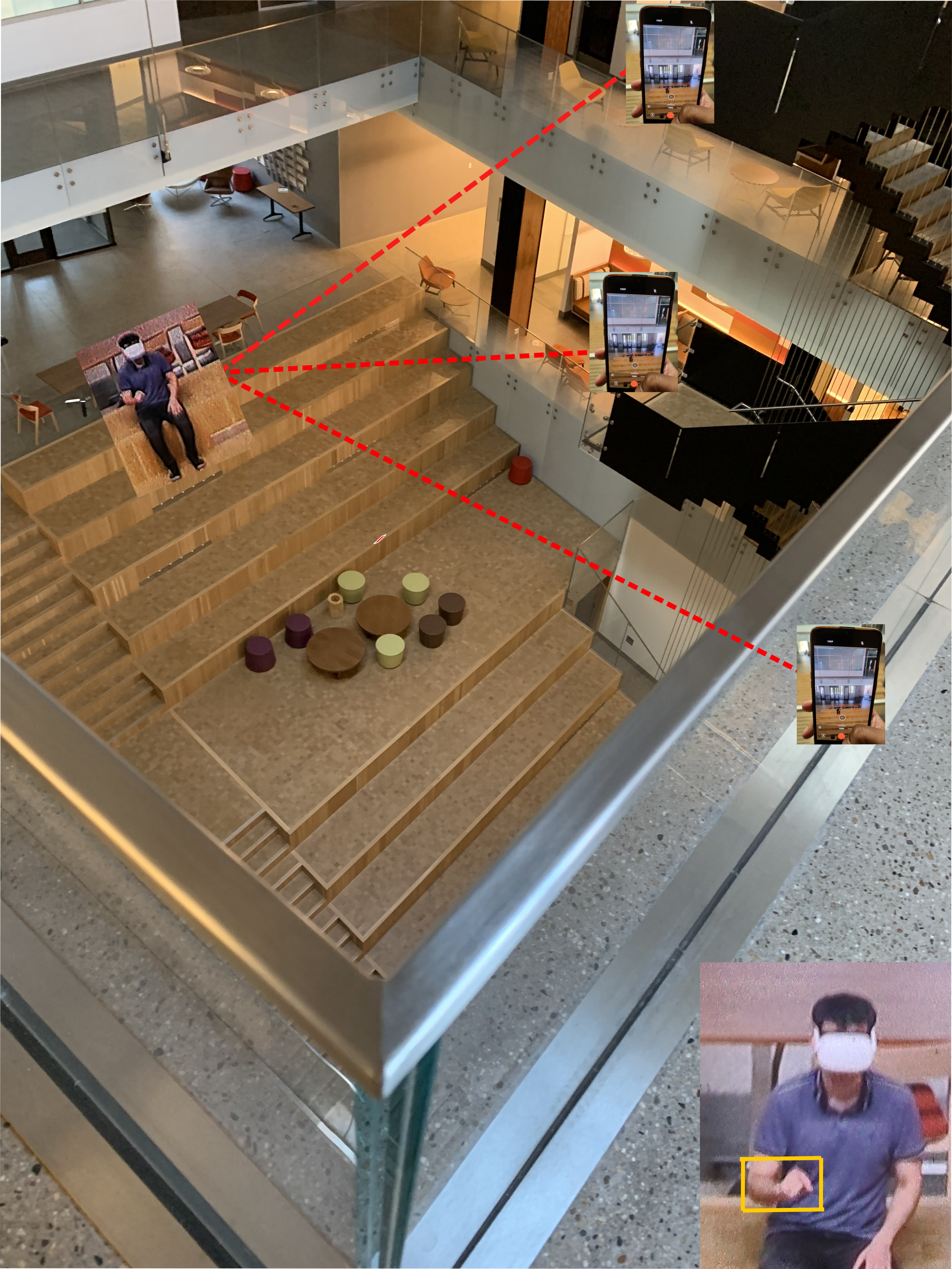

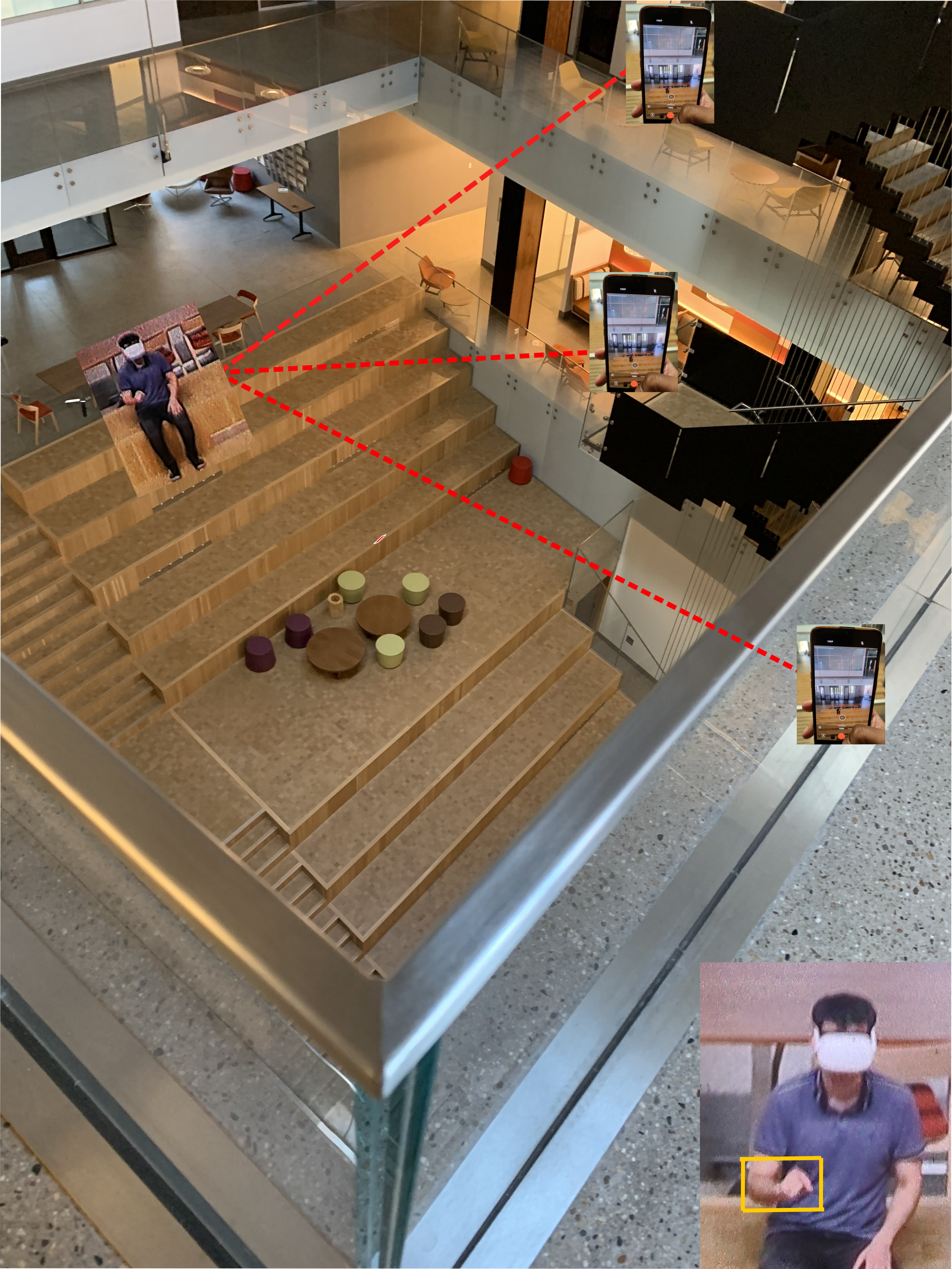

This research investigates how immersive computing systems unintentionally expose private user information through passive side channels in shared physical and virtual spaces. Rather than relying on device compromise, malware, network traffic, or direct sensor access, this work studies how observable signals produced during normal interaction, such as body motion, avatar animation, display behavior, and optical artifacts, can reveal sensitive information including user inputs, authentication behavior, speech content, application context, and on-screen data. The research develops realistic adversary models, end-to-end inference pipelines, and synthetic and real-world datasets across AR, VR, and MR platforms to characterize how these leakage channels emerge from the design of immersive interfaces and the physical properties of wearable displays. By combining computer vision, signal processing, and domain-adapted machine learning, this work shows how noisy and indirect observations can be transformed into meaningful private information. Ultimately, this research frames side-channel leakage as a systemic property of immersive interaction rather than an isolated vulnerability, with the goal of informing architectural, software-level, and user-interface defenses that reduce information exposure while preserving usability, realism, and social presence.

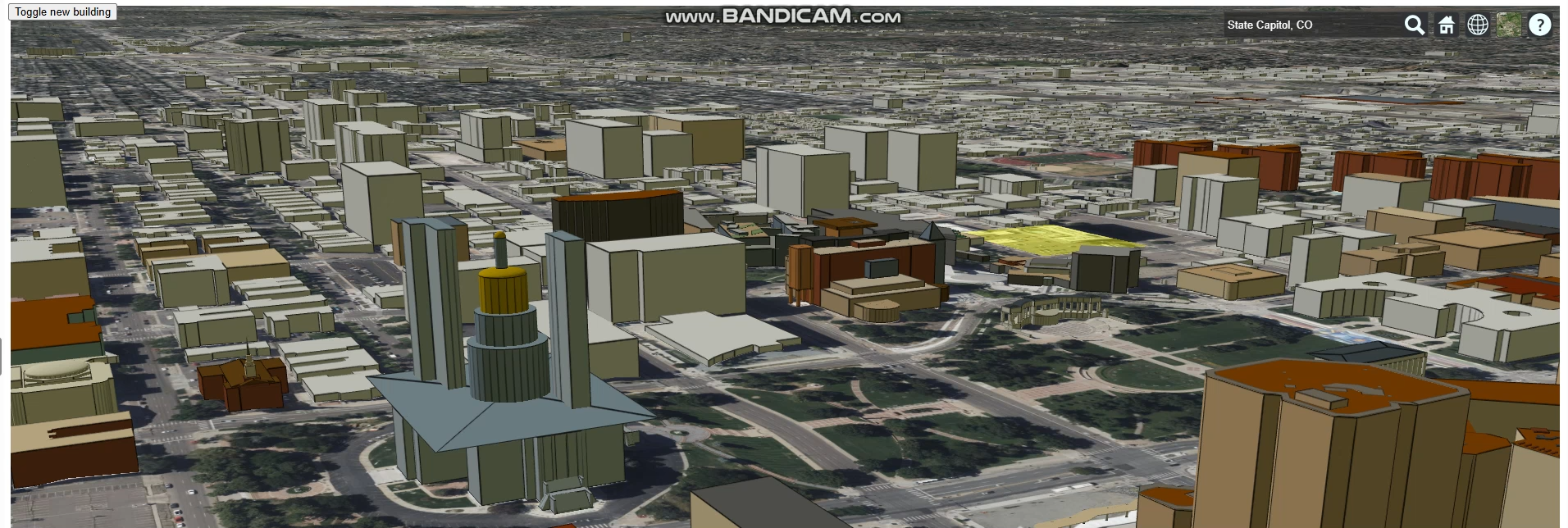

This research focuses on developing trustworthy digital twins for smart rural places by integrating artificial intelligence, computer vision, real-time sensing, and 3D geospatial modeling to create dynamic virtual representations of rural infrastructure and environments. Unlike urban settings, rural communities face unique challenges such as sparse data, limited sensing coverage, constrained computing resources, and extreme weather conditions that can make AI systems fragile. Our work addresses these challenges by designing digital twins that remain synchronized with the physical world, restore degraded visual information, forecast mobility and infrastructure behavior, and support resilient decision-making for rural communities.

This research investigates how Gaussian Splatting can be extended from a passive photorealistic rendering technique into an interactive scene representation for mixed reality and mobile XR. While Gaussian Splatting enables real-time, high-quality 3D scene reconstruction, conventional splat-based scenes remain largely unaware of objects, physical behavior, and user interaction. Each Gaussian represents visual appearance and spatial position, but not the semantic object it belongs to or how that object should respond when a user reaches out, moves it, or interacts with it. For immersive systems, this limitation makes reconstructed scenes visually realistic but functionally static, reducing presence and preventing natural interaction with the virtualized world.

This research direction develops object-decoupled, physics-aware, and interaction-ready Gaussian Splatting for mobile XR. The work focuses on scene representations that separate static background structure from individually addressable object-level splat sets, training methods that preserve this decoupled structure during optimization and fine-tuning, and runtime systems that support rigid-body physics, controller interaction, and headset-rate rendering on resource-constrained XR devices. By combining photorealistic reconstruction with object awareness and physical interactivity, this research aims to transform Gaussian Splatting from a frozen visual scene into a responsive mixed reality medium where users can pick up, move, and manipulate reconstructed objects naturally.

This research investigates how to improve the efficiency, reasoning ability, and reliability of multimodal foundation models, with a focus on vision–language models (VLMs). As these models are increasingly used for complex tasks involving visual understanding and natural language reasoning, key challenges remain in their computational efficiency, spatial reasoning capabilities, and susceptibility to hallucinations. This project addresses these challenges through a combination of model design, training-free inference methods, and mechanistic analysis of transformer architectures.

The work explores token-efficient multimodal architectures, such as Delta-LLaVA, that reduce computational overhead while maintaining strong visual–language performance. In parallel, it develops training-free segmentation and grounding approaches that leverage vision foundation models to improve spatial coherence and visual alignment without requiring additional model training. To better understand and mitigate unreliable outputs, the research also investigates the internal mechanisms of VLMs, analyzing how attention dynamics and intermediate representations influence grounding, reasoning, and hallucination behavior.

In addition to model and method development, this project contributes evaluation resources for multimodal reasoning, including new benchmarks such as MARS for studying spatial–symbolic mathematical reasoning in vision–language systems. By combining architectural innovation, interpretability-driven analysis, and targeted evaluation frameworks, this work aims to deepen our understanding of how multimodal models perceive, reason over, and compute with visual information while remaining computationally efficient and robust.

Computing Resources

Secure Sensing and Learning (SSL) Research Lab

The SSL Research Lab is affiliated with the Artificial Intelligence Lab and housed in the Engineering Education and Research Building (EERB) at the University of Wyoming. The lab houses several wearable devices for studying user interaction and behavior. The wearable research devices at the SSL Research Lab include:

- Motion Sensors - 12 wrist band kits with MetaMotionS+ Sensors.

- Mixed Reality Systems - two Apple Vision Pro headsets, two Magic Leap 2 headsets, HoloLens 2, Oculus Quest 2 (64GB), HTC Vive Focus 3 headset, Samsung Gear VR, DESTEK V5 VR headset.

- Hand-held smart wearables - 2 Samsung Galaxy S20 5G, 1 Apple iPhone 11 4G, 6 Apple iPhone SE (2nd generation), five Samsung Galaxy XCover Pro.

- Brain Signals for Biometrics Analysis - mBrainTrain Smarting Pro EEG system, Wearable Sensing DSI-24 system, Wearable Sensing DSI-Flex system, CREMedical tEEG system, Zeto Inc. EEG System, Emotiv Epoc+ headset, 2 Emotiv EpocX headsets, 2 EMOTIV Insight 5 Channel Mobile Brainwear® headsets, Muse S headset.

- Side Channel Analysis - 2 Monsoon Power Monitors, Fluke 117 True RMS Multimeter.

- Video Tracking - 4 Azure Kinect DK systems, two Cannon P950 cameras.

Artificial Intelligence Lab

The Artificial Intelligence Lab is housed in the Engineering Education and Research Building (EERB) at the University of Wyoming. The AI lab houses multiple HPC machines for data processing and analysis:

- One Intel Core i9-10940X Deep Learning Workstation with 4 RTX 6000 GPUs from SabrePC.

- One AMD Threadripper 3975WX (32 cores) Deep Learning Workstation with 3 RTX A6000 GPUs (NVLinked).

- One AMD Threadripper 3975WX (32 cores) Deep Learning Workstation with 2 RTX A6000 GPUs (NVLinked).

Advanced Research Computing Center

The Advanced Research Computing Center (ARCC) at the University of Wyoming provides access to computational and storage resources: the Teton high-performance computing system and the petaLibrary storage system.

The Teton cluster provides more than 15,000 CPU cores across more than 500 nodes with a total of more than 80 TB of memory. Regular nodes have 32-40 cores and 128 GB of RAM, high-memory nodes have up to 1 TB of RAM, and Knights Landing nodes provide 72 cores per machine with 400 GB of RAM. Approximately half of the nodes have local SSD storage of up to 7 TB capacity. The GPUs available on the cluster are NVIDIA P100 16G, NVIDIA V100 16G, NVIDIA V100 32G, NVIDIA K20, NVIDIA K20x, NVIDIA K40, NVIDIA K80, NVIDIA GTX Titan, and NVIDIA GTX Titan X. The petaLibrary storage system is connected to the UW network backbone at 40 Gbps (upgradeable to 80 Gbps), which is connected to the Internet2 research network at 100 Gbps. It is accessible through the SMB protocol from on-campus networks and Globus from off-campus networks.

NCAR Wyoming Supercomputing Center

The NCAR Wyoming Supercomputing Center (NWSC) houses Cheyenne, a 5.34-petaflop supercomputer, in a specially built computational facility near Cheyenne, Wyoming.

Cheyenne is an HPE ICE XA cluster with 145,152 latest-generation Intel Xeon processor cores in 4,032 dual-socket nodes (36 cores/node) and 313 TB (terabytes) of total memory. Cheyenne's login nodes give users access to the GLADE shared-disk resource and the Campaign Storage system. The GLADE system (also referred to as the Globally Accessible Data Environment) has a total usable capacity of 38 PB (petabytes) and has a maximum bandwidth of 200 GBps (gigabytes per second) to Cheyenne.

Tools and Research Approach

We use methods and approaches drawn from fields such as probability theory, statistical learning, and game theory.